How do I configure a multi-node simulation?

Hi,

In the project I'm working on, we are trying to run large-scale what-if simulations. We have almost 1,000 propositions and 1 million customers.

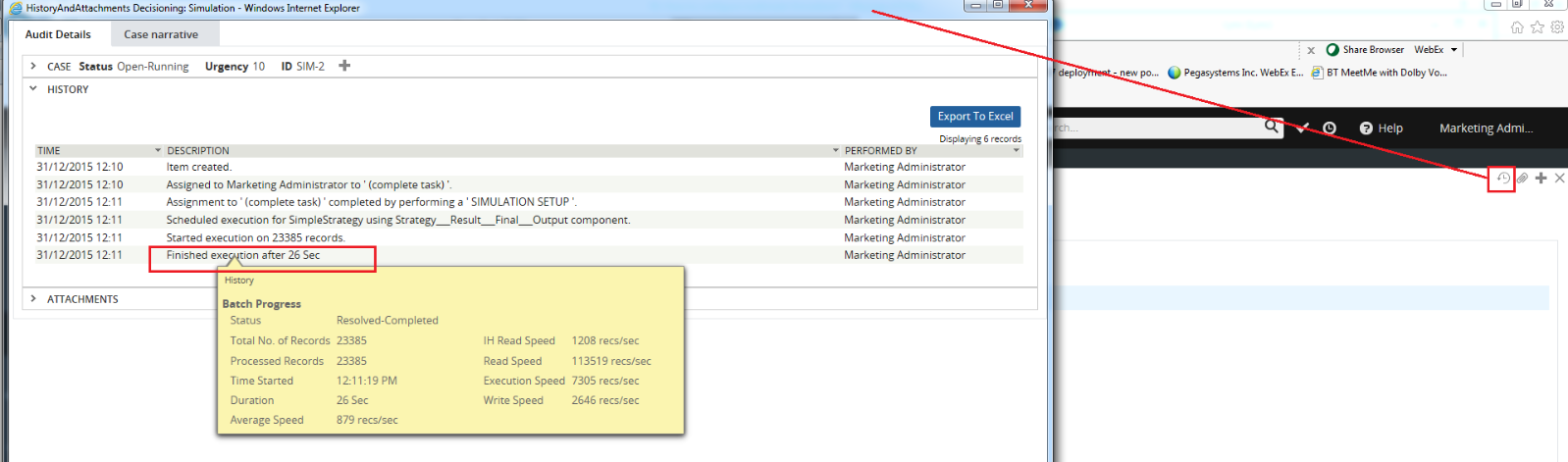

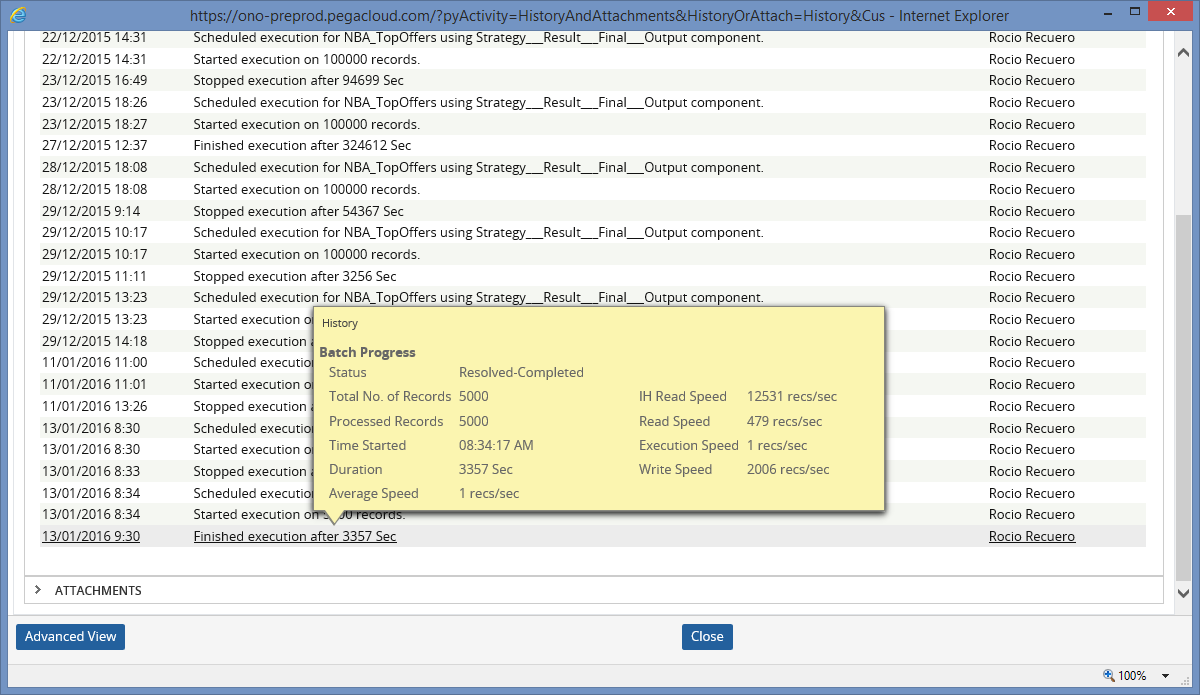

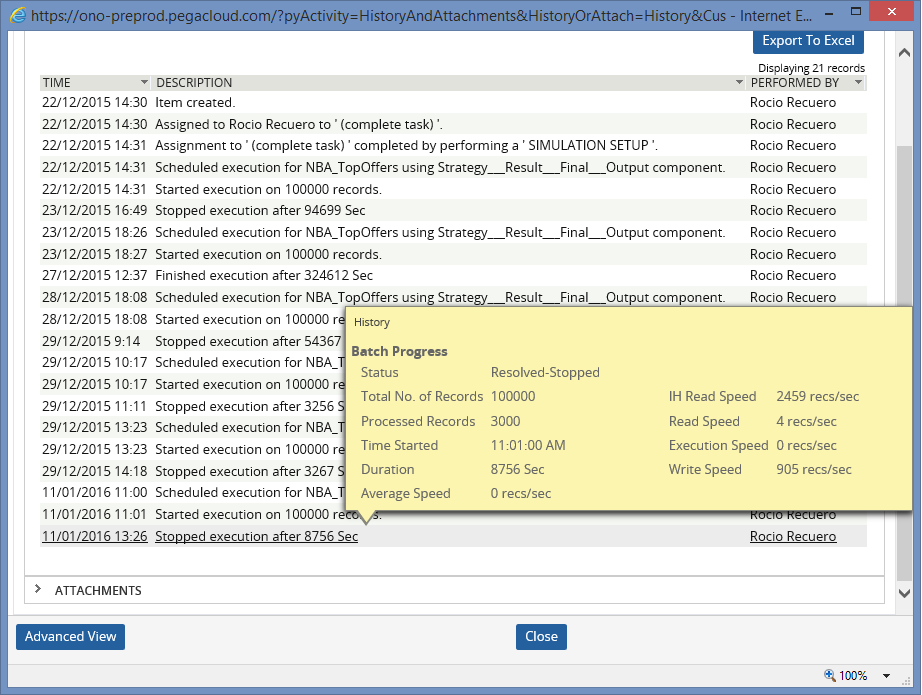

When we run a single-node simulation for 100,000 customers to see what would happen if some changes went live, the full simulation takes close to 10 hours.
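To put the single-node figure in perspective, here is a rough throughput calculation. It is only a sketch and assumes that run time scales linearly with the number of customers, which may not hold exactly for a real Pega simulation:

```python
# Rough single-node throughput, from the figures quoted above.
# Assumption: run time scales linearly with the number of customers.

SECONDS_PER_HOUR = 3600


def throughput_per_second(customers: int, hours: float) -> float:
    """Customers processed per second for a run of the given length."""
    return customers / (hours * SECONDS_PER_HOUR)


def projected_hours(customers: int, rate_per_second: float) -> float:
    """Hours needed to process `customers` at a constant rate."""
    return customers / rate_per_second / SECONDS_PER_HOUR


rate = throughput_per_second(100_000, 10)    # roughly 2.8 customers/s
full_run = projected_hours(1_000_000, rate)  # roughly 100 hours

print(f"{rate:.2f} customers/s, full customer base: {full_run:.0f} h")
```

Under that linear assumption, simulating the whole base of 1 million customers on a single node would take on the order of 100 hours, which is why a multi-node setup is attractive.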

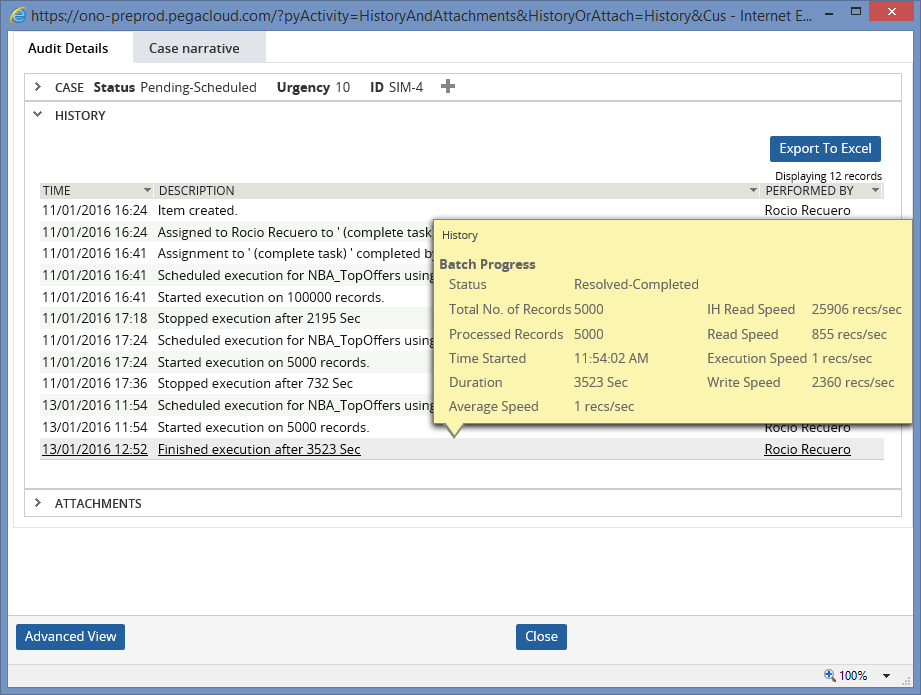

We have tried to configure our pre-prod environment to run multi-node simulations following the instructions from the Pega training course "Decisioning Simulations for System Architects 7.1" and the "DSM Reference Guide 7.1.7", but something must be missing or wrong, because the run time has increased dramatically (we now estimate it at around 2 or 3 days).

Our pre-prod environment has 2 servers with 2 nodes each.
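For comparison, this sketch estimates what perfect scaling across the 4 nodes would give and how far the observed estimate is from it. It assumes the "2 or 3 days" refers to the same 100,000-customer run, and that work splits evenly with no coordination overhead, so the ideal figure is a best case rather than a realistic target:

```python
# Compare the ideal multi-node run time with the observed estimate.
# Assumptions: the 2-3 day estimate is for the same 100,000-customer run,
# work splits evenly across nodes, and there is no coordination overhead.

SINGLE_NODE_HOURS = 10.0          # 100,000 customers on one node
SERVERS, NODES_PER_SERVER = 2, 2  # pre-prod topology


def ideal_hours(single_node_hours: float, nodes: int) -> float:
    """Best-case run time with perfect linear scaling."""
    return single_node_hours / nodes


nodes = SERVERS * NODES_PER_SERVER                  # 4 nodes
best_case = ideal_hours(SINGLE_NODE_HOURS, nodes)   # 2.5 hours

# Observed estimate: "2 or 3 days".
for observed_hours in (48.0, 72.0):
    vs_single = observed_hours / SINGLE_NODE_HOURS
    vs_ideal = observed_hours / best_case
    print(f"observed {observed_hours:.0f} h: {vs_single:.1f}x slower than "
          f"single node, {vs_ideal:.0f}x slower than ideal")
```

Even allowing for overhead, a multi-node run that is roughly 5 to 7 times slower than a single node suggests a configuration problem rather than normal scaling loss.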

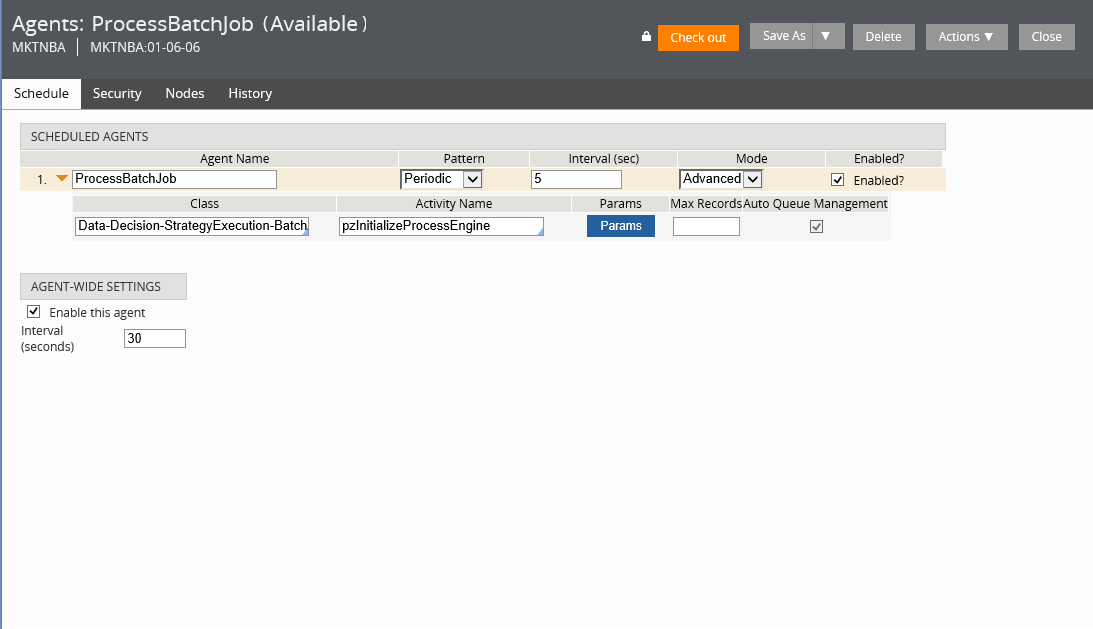

The first thing I did was create a new "ProcessBatchJob" agent in my application (see the image below) and verify in SMA that it was running:

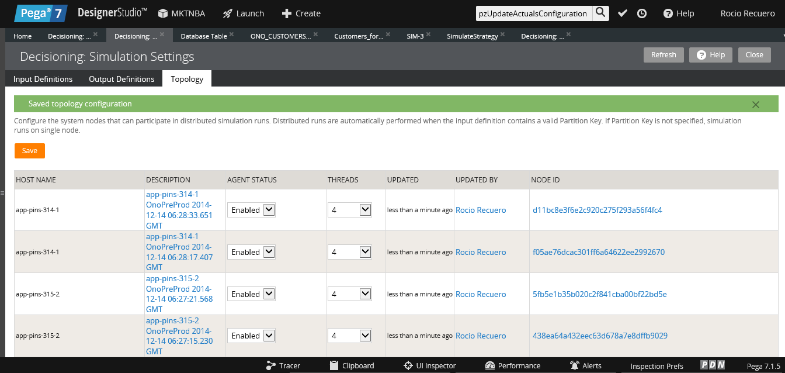

After that, I modified the Topology settings to match the first image (note: I also tried 2 threads per node, and there was no meaningful performance difference).

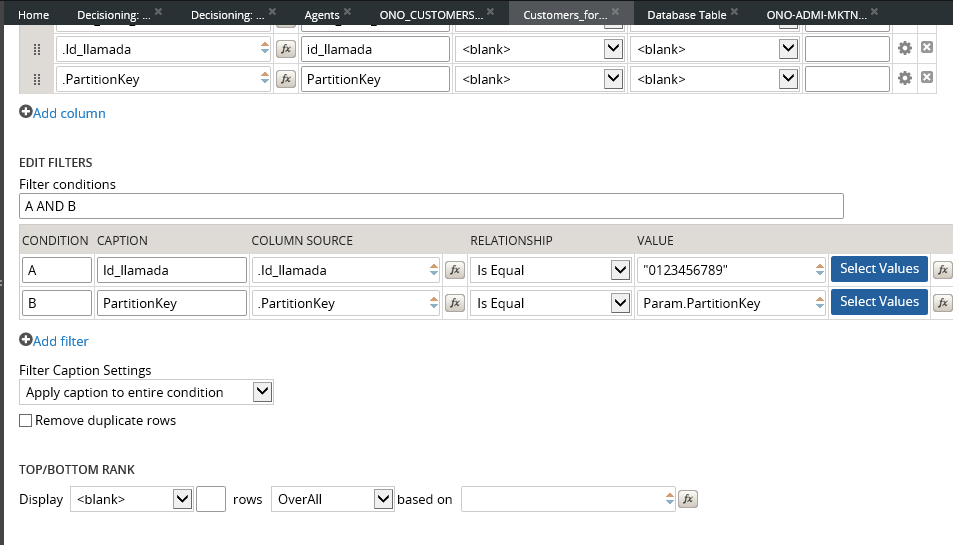

Then, following the training course instructions, I added a new numeric column named "PartitionKey" to the customer database table and filled it with random values between 1 and 10. I then created the corresponding property in the customer Pega class and re-mapped the class to the database table.
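The partition-key step can be sketched outside Pega to check that random assignment produces roughly equal-sized partitions. This uses an in-memory SQLite database as a stand-in; the table name `customer`, its `id` and `name` columns, and the row count are hypothetical, and only the `PartitionKey` column name comes from the steps above:

```python
import random
import sqlite3

# Hypothetical stand-in for the customer table; the real table lives in
# the customer database and has many more columns.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customer (id INTEGER PRIMARY KEY, name TEXT)")
conn.executemany(
    "INSERT INTO customer (name) VALUES (?)",
    [(f"customer-{i}",) for i in range(10_000)],
)

# Add the numeric PartitionKey column.
conn.execute("ALTER TABLE customer ADD COLUMN PartitionKey INTEGER")

# Assign each customer a random key between 1 and 10 (seeded so the
# sketch is reproducible).
rng = random.Random(42)
ids = [row[0] for row in conn.execute("SELECT id FROM customer")]
conn.executemany(
    "UPDATE customer SET PartitionKey = ? WHERE id = ?",
    [(rng.randint(1, 10), row_id) for row_id in ids],
)

# Check how evenly the customers landed in each partition.
counts = dict(conn.execute(
    "SELECT PartitionKey, COUNT(*) FROM customer "
    "GROUP BY PartitionKey ORDER BY PartitionKey"
).fetchall())
print(counts)
```

With uniform random keys, each of the 10 partitions should hold close to a tenth of the customers, so partition size on its own is unlikely to explain a large slowdown.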

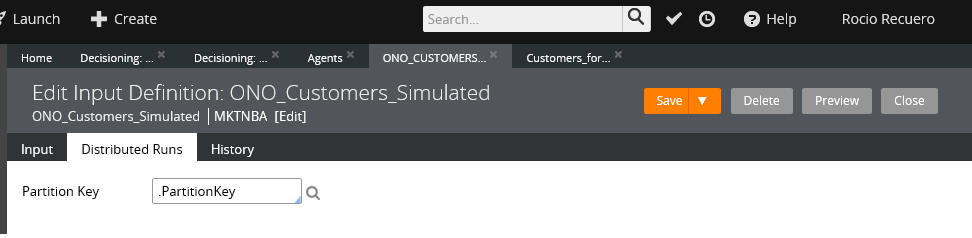

Finally, I modified the Input Definition to set the customer's PartitionKey property in the Partition Key field under Distributed Runs, and also modified the Report Definition to add the "Partition Key Parameter" and
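One thing worth reasoning about is how the 10 partition key values line up with the number of worker threads. The sketch below assumes, hypothetically, that each partition is processed as a single unit by one thread and that partitions are handed out round-robin; the post does not say how Pega actually assigns partitions, so treat this only as a way to think about balance:

```python
from collections import Counter


def partitions_per_worker(num_partitions: int, workers: int) -> list[int]:
    """Partitions each worker gets under (assumed) round-robin assignment."""
    load = Counter(p % workers for p in range(num_partitions))
    return [load[w] for w in range(workers)]


def imbalance(num_partitions: int, workers: int) -> float:
    """Busiest worker's share of the work divided by the ideal share.

    Assumes equally sized partitions; 1.0 means perfectly balanced.
    """
    busiest = max(partitions_per_worker(num_partitions, workers))
    return busiest / (num_partitions / workers)


# 2 servers x 2 nodes, with 1 or 2 threads per node; 10 partition keys.
for threads_per_node in (1, 2):
    workers = 4 * threads_per_node
    print(workers, partitions_per_worker(10, workers),
          f"imbalance {imbalance(10, workers):.2f}")
```

Under this assumption, 10 partitions over 4 threads gives a 3/3/2/2 split, so the busiest thread does 20% more than its fair share; a partition count that is a multiple of the total thread count (for example 40 for 8 threads) would split evenly.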

Do you have any idea what might be happening? Please let me know if you need more information to clarify anything.

Regards,

Rocío