Question

Last activity: 17 Jul 2017 16:44 EDT

How do I use pxNextKey for a second Obj-Browse?

Hi all,

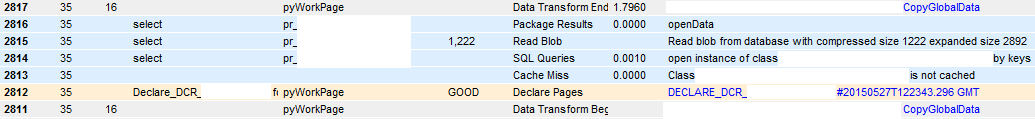

I am currently optimizing a DB query that takes forever. The objective is to retrieve 5,000 to 15,000 rows from the database via Obj-Browse in an activity. Measuring performance for 6,000 rows, I noticed that the Obj-Browse itself is not what takes the most time (~5-10 seconds); it is the following "Append and map to" step, which copies the retrieved data from the result page into the work object (20-30 minutes!). The performance does not scale linearly: 1,500 rows can easily be processed in 1.5 minutes.

My next idea is to "chunk" the data so that the result page won't be as big as the target page list of the work object. I want to Obj-Browse only 1,000 or 1,500 rows, append the result page to my work object, and repeat this until I have processed all rows. As a parameter in the activity I can specify the maximum number of records for the Obj-Browse step, but how do I proceed with the next iteration? How do I use pxNextKey to start the next browse at the 1,001st row?
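To make the chunking idea concrete, here is a rough sketch of the loop I have in mind, written in plain Python rather than as Pega activity steps. Everything in it is a hypothetical stand-in: `TABLE` simulates the database, `browse_chunk` plays the role of an Obj-Browse with a max. records limit, and `next_key` is only my guess at the kind of "resume from here" value that pxNextKey might provide. Whether pxNextKey actually works this way is exactly what I am asking.

```python
from typing import List, Optional, Tuple

# Hypothetical stand-in for the database table: 6,000 rows sorted by a unique key.
TABLE = [{"key": k, "value": f"row-{k}"} for k in range(1, 6001)]


def browse_chunk(after_key: Optional[int], max_records: int) -> Tuple[List[dict], Optional[int]]:
    """Simulated browse: return up to max_records rows whose key is greater
    than after_key, plus the key to resume from (None when nothing is left)."""
    rows = [r for r in TABLE if after_key is None or r["key"] > after_key][:max_records]
    # A full chunk may have more rows behind it; a short (or empty) chunk means we are done.
    next_key = rows[-1]["key"] if len(rows) == max_records else None
    return rows, next_key


def fetch_all(chunk_size: int) -> List[dict]:
    """Fetch every row in chunks, appending each small result to the target list."""
    target: List[dict] = []          # stands in for the page list on the work object
    after_key: Optional[int] = None  # no key yet: start from the first row
    while True:
        rows, next_key = browse_chunk(after_key, chunk_size)
        target.extend(rows)          # "append and map" one small chunk at a time
        if next_key is None:
            break
        after_key = next_key         # resume after the last row already copied
    return target


all_rows = fetch_all(1500)
```

The point of the sketch is only the control flow: each iteration copies a small result into the target and remembers the last key seen, so the next browse can start right after it instead of re-reading rows that were already processed.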

Thanks in advance and best wishes!

***Updated by moderator: Marissa to close post***
