How to manually commit offsets after consuming from Kafka

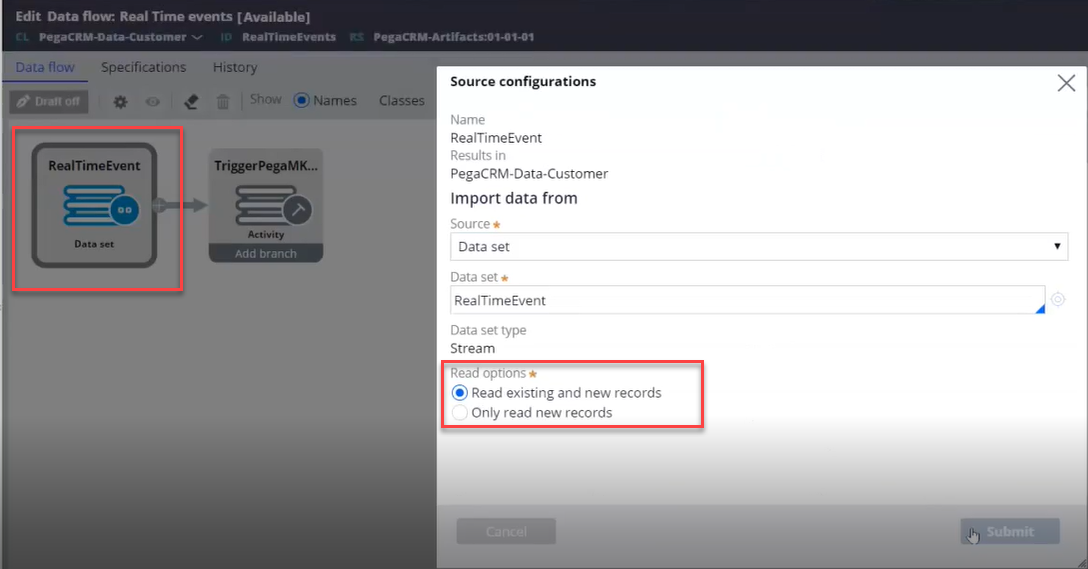

I am able to receive messages from a Kafka data set and store them in a database using a data flow. If an issue occurs in the data flow while it is reading a message and we then restart the data flow, will it resume from the last successfully processed message? How does this work in Pega? Is there any way to manage the consumer offset manually?
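To make the behavior in question concrete, here is a minimal sketch of the "commit only after a successful write" pattern, as used with the plain Kafka consumer client when `enable.auto.commit` is set to `false`. It is not Pega code and does not show how a Pega data flow handles offsets. `InMemoryPartition`, `run_consumer`, and `store_in_database` are hypothetical names that simulate a single partition in memory, so the example runs without a broker.

```python
from typing import Callable, List


class InMemoryPartition:
    """Hypothetical stand-in for one Kafka partition and its committed offset."""

    def __init__(self, messages: List[str]) -> None:
        self.messages = messages
        self.committed = 0  # offset of the next message to read after a restart

    def commit(self, next_offset: int) -> None:
        self.committed = next_offset


def run_consumer(partition: InMemoryPartition, process: Callable[[str], None]) -> None:
    """Start at the last committed offset; commit only after processing succeeds."""
    offset = partition.committed
    while offset < len(partition.messages):
        process(partition.messages[offset])  # may raise; nothing is committed then
        offset += 1
        partition.commit(offset)  # manual commit after the message was stored


partition = InMemoryPartition(["m0", "m1", "m2", "m3"])
stored: List[str] = []
fail_once = {"m2"}  # simulate a one-time failure while handling m2


def store_in_database(message: str) -> None:
    if message in fail_once:
        fail_once.discard(message)
        raise RuntimeError(f"simulated failure on {message}")
    stored.append(message)


try:
    run_consumer(partition, store_in_database)
except RuntimeError:
    pass  # the consumer stopped; the committed offset still points at m2

offset_after_failure = partition.committed
run_consumer(partition, store_in_database)  # the restart resumes at m2
```

In this simulation the failed message is read again after the restart, so nothing is skipped. With a real consumer, the same pattern gives at-least-once delivery: a message that was stored but not yet committed can be delivered a second time, so the database write should tolerate duplicates.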
