Question

Financial Services

AU

Last activity: 16 May 2017 5:24 EDT

OCR Integration with Pega 7

Hi there,

I'm looking for advice/suggestions on OCR integration. The use case: we want to scan an image (a driver's license) and pre-populate the application form from the scanned document. I'd like to know which software others have used, whether this is possible at all, etc.

Thanks,

Jai


Accepted Solution

Updated: 7 Dec 2015 11:41 EST

I've successfully built out a proof-of-concept level integration with Tesseract via the Tess4J library. It's well beyond out-of-the-box Pega functionality, but Tesseract is one of the most efficient and accurate OCR implementations available... and it's open source.

Since Tesseract is a native library (compiled code / .dll or .so binary), it has to be installed on the server machine, and the installation varies by OS and processor architecture. The final result is a system that looks something like this, where parentheses represent "wrapping" or calling:

Data Transform(Function Rule(Java Class(JNA(Tesseract Library(image file)))))
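In this chain, the Function Rule layer is where Tesseract's raw text could be turned into the individual values the Data Transform then sets on the application form. A minimal sketch of that mapping step, assuming plain-text OCR output: the field names, label spellings and regular expressions are hypothetical, since real licences differ by state and country and OCR text is noisy. In Pega the body of `parse` would sit in a Function rule; the class wrapper only makes it runnable on its own.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

public class LicenseTextParser {

    // Hypothetical label patterns; tune them against real scans of the target document.
    private static final Map<String, Pattern> FIELDS = new LinkedHashMap<>();
    static {
        FIELDS.put("LicenseNumber", Pattern.compile(
                "LICEN[CS]E\\s*(?:NO|NUMBER)\\.?\\s*[:#]?\\s*([A-Z0-9]{5,12})", Pattern.CASE_INSENSITIVE));
        FIELDS.put("DateOfBirth", Pattern.compile(
                "(?:DOB|DATE OF BIRTH)\\s*:?\\s*(\\d{2}[/.-]\\d{2}[/.-]\\d{4})", Pattern.CASE_INSENSITIVE));
    }

    /** Returns the first match for each field; fields that are not found are simply omitted. */
    public static Map<String, String> parse(String ocrText) {
        Map<String, String> values = new LinkedHashMap<>();
        for (Map.Entry<String, Pattern> field : FIELDS.entrySet()) {
            Matcher m = field.getValue().matcher(ocrText);
            if (m.find()) {
                values.put(field.getKey(), m.group(1).toUpperCase());
            }
        }
        return values;
    }

    public static void main(String[] args) {
        String sample = "DRIVER LICENCE\nLicence No: 12345678\nDOB 01/02/1980\n";
        System.out.println(parse(sample));
    }
}
```

Returning a map rather than setting properties directly keeps the Java side independent of the clipboard; the Data Transform can copy whichever keys are present and leave the rest for the user to fill in.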

Tess4J handles the Java Class and JNA portions of the architecture, which would be the most challenging pieces for a Pega developer. Before calling the function, the image file needs to be written to disk in a location accessible to the Tesseract library. For attachments, this means their content needs to be extracted from the database and written to a file (a java.io.File). Once OCR has been performed, the file can be deleted.
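A minimal sketch of the "write to disk, run OCR, delete" step, assuming the attachment content is already available as a Base64 string (how it is read from the attachment instance is not shown). `OcrEngine` is a hypothetical interface, not part of Tess4J: with Tess4J on the classpath it could be implemented as `image -> tesseract.doOCR(image)` for a configured `Tesseract` instance. The stub in `main` only reports the file size so the sketch runs without the native library.

```java
import java.io.File;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.Base64;

public class AttachmentOcr {

    /** Hypothetical abstraction over the OCR call (e.g. a Tess4J-backed implementation). */
    public interface OcrEngine {
        String recognize(File image) throws Exception;
    }

    /**
     * Decodes the attachment, writes it to a temporary file that the native library can read,
     * runs OCR on that file and deletes it again, even if OCR throws.
     */
    public static String ocrAttachment(String base64Content, String suffix, OcrEngine engine) throws Exception {
        byte[] bytes = Base64.getDecoder().decode(base64Content);
        Path tmp = Files.createTempFile("ocr-", suffix);
        try {
            Files.write(tmp, bytes);
            return engine.recognize(tmp.toFile());
        } finally {
            Files.deleteIfExists(tmp);
        }
    }

    public static void main(String[] args) throws Exception {
        String encoded = Base64.getEncoder().encodeToString("not really a PNG".getBytes());
        // Stub engine: reports the size of the temporary file instead of recognised text.
        String result = ocrAttachment(encoded, ".png", image -> "read " + image.length() + " bytes");
        System.out.println(result);
    }
}
```

The `finally` block is the design point: the temporary copy of a scanned licence is removed whether or not OCR succeeds. `createTempFile` uses the JVM's default temp directory, which is assumed here to be readable by the native library on the same node.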

If only a portion of the file needs to be read, Tesseract supports passing in the coordinates of a rectangle representing the area of the image to be read. Alternatively, the image could be cropped prior to running OCR on it.
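For the cropping alternative, the standard Java imaging classes are enough. The sketch below clips a rectangle to the image bounds and copies that region into a new image that could then be written to disk and passed to OCR. The coordinates in `main` are hypothetical; real values depend on the document layout and scan resolution. For the first alternative, Tess4J's `ITesseract` interface also declares `doOCR` overloads that accept a `java.awt.Rectangle` (worth checking against the Tess4J version in use).

```java
import java.awt.Graphics2D;
import java.awt.Rectangle;
import java.awt.image.BufferedImage;

public class RegionCrop {

    /** Returns the part of {@code image} inside {@code region}, clipped to the image bounds. */
    public static BufferedImage crop(BufferedImage image, Rectangle region) {
        Rectangle bounds = new Rectangle(0, 0, image.getWidth(), image.getHeight());
        Rectangle clipped = region.intersection(bounds);
        if (clipped.isEmpty()) {
            throw new IllegalArgumentException("Region lies outside the image: " + region);
        }
        // getSubimage shares pixel data with the original; copy it into an independent RGB image.
        BufferedImage sub = image.getSubimage(clipped.x, clipped.y, clipped.width, clipped.height);
        BufferedImage copy = new BufferedImage(sub.getWidth(), sub.getHeight(), BufferedImage.TYPE_INT_RGB);
        Graphics2D g = copy.createGraphics();
        g.drawImage(sub, 0, 0, null);
        g.dispose();
        return copy;
    }

    public static void main(String[] args) {
        BufferedImage scan = new BufferedImage(800, 500, BufferedImage.TYPE_INT_RGB);
        // Hypothetical field position; the region is partly outside the scan, so it gets clipped.
        BufferedImage field = crop(scan, new Rectangle(600, 400, 400, 200));
        System.out.println(field.getWidth() + "x" + field.getHeight());
    }
}
```

Clipping rather than failing on a slightly oversized rectangle tolerates small differences between scans; converting to RGB drops any alpha channel, which should not matter for OCR.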

Pegasystems Inc.

IN

Hello,

Unfortunately, we don't yet have any tool that supports integration with OCR/ICR software.

If you want to do the scanning from the application itself, you can refer to this KB article: http://pdn.pega.com/node/11722

Then you can follow the steps mentioned here to read the scanned item : https://community.pega.com/integration/pega-content-management-capture-store-and-view-content

Pegasystems Inc.

DE

Hi Zane,

I have a customer with the same requirement.

Do you have any screenshots / videos / documentation / RAP etc. of your PoC you can share?

Especially some screenshots would be really helpful.

Best regards,

Florian

Updated: 6 Apr 2016 13:45 EDT

Pegasystems Inc.

DE

Update:

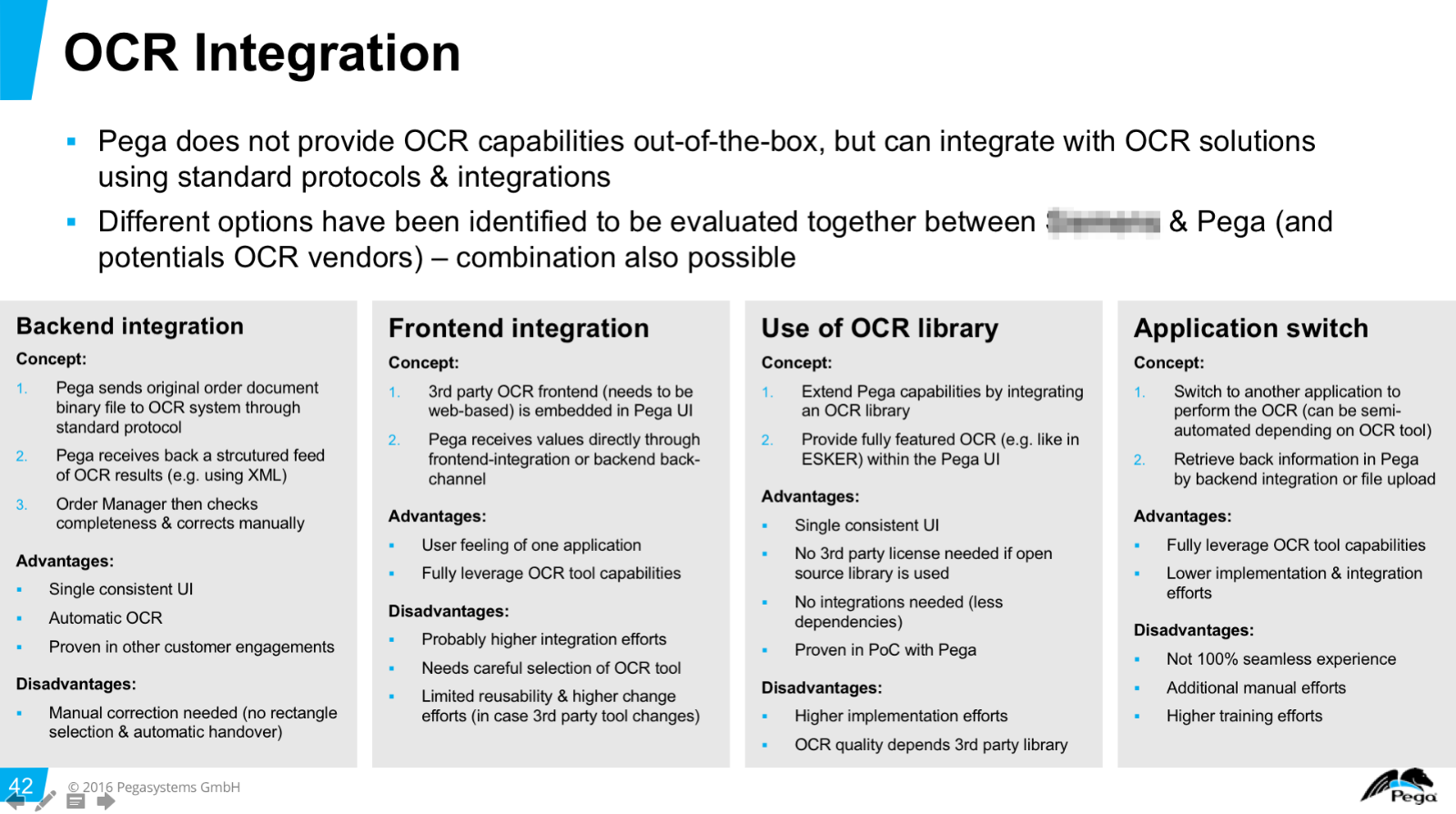

Unfortunately I was not able to find much more information than what is discussed on this thread, but there are different options available:

- Backend-based integration with OCR system (e.g. we put an image file / pdf somewhere or hand it over via web service to the OCR system and receive a structured system readable response back with the results)

- Fronted-based integration with OCR system (we integrate a web-based / plugin based UI into Pega and communicate through JavaScript + maybe backend based integration in addition to that)

- Native OCR implementation with 3rd party library in Pega (i.e. based on Tesseract / Tess4J like discussed in this thread)

- Application switch (worst option in terms of user experience, but easiest from implementation point of view and in order to save existing investments --> i.e. the user switches to the OCR application, uploads the file manually and then either receives an XML / CSV file as a result which he manually uploads in Pega or puts it into a folder with the Case ID in the filename and Pega picks it up)

Here are the options we discussed:

The final decision will be made during the inception of the project and I will post an update then - and later based on the implementation.

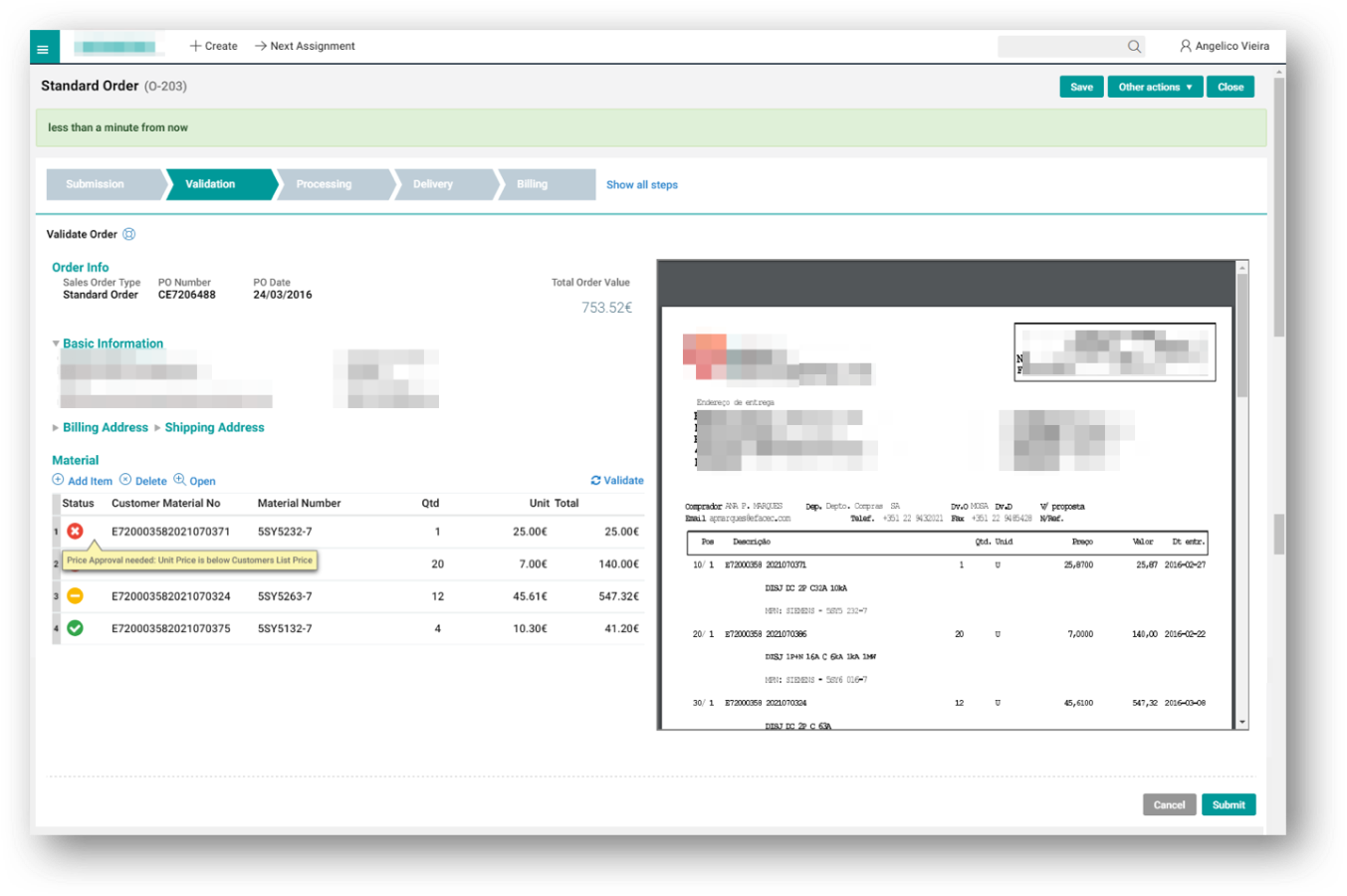

For our vision demo, we simulated the OCR part, but included what the UI would look like with options 1-3.

In this case, a PDF order would already have been OCR'd / parsed and shown to the Order Manager for verification and validation (we show the results on the left-hand side and the original PDF document on the right). This would be the case with options 1, 2 and 3, but the actual capabilities would differ: with options 2 and 3 we could interact with the UI, e.g. select something visually in the PDF and automatically generate a new order item on the left, whereas with option 1 (backend integration only) this would be a manual change during the validation step.
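Option 1 (backend-based integration) essentially means handing the document to the OCR system and receiving structured results back. In Pega this exchange would more typically be configured as a Connect-REST rule; the plain-Java sketch below (Java 11+ `java.net.http`) only illustrates the shape of such a request. The endpoint URL and the `X-Case-Id` correlation header are hypothetical placeholders, not the API of any particular OCR product.

```java
import java.net.URI;
import java.net.http.HttpRequest;
import java.time.Duration;

public class OcrServiceRequest {

    /** Builds a POST that hands a scanned document to an external OCR service (placeholder API). */
    public static HttpRequest build(URI endpoint, byte[] document, String contentType, String caseId) {
        return HttpRequest.newBuilder(endpoint)
                .timeout(Duration.ofSeconds(30))
                .header("Content-Type", contentType)
                .header("Accept", "application/json")
                // Hypothetical header so the structured result can be matched back to the case.
                .header("X-Case-Id", caseId)
                .POST(HttpRequest.BodyPublishers.ofByteArray(document))
                .build();
    }

    public static void main(String[] args) {
        byte[] pdf = {0x25, 0x50, 0x44, 0x46}; // just the "%PDF" magic bytes, not a real document
        HttpRequest req = build(URI.create("https://ocr.example.com/v1/documents"), pdf, "application/pdf", "ORDER-1");
        System.out.println(req.method() + " " + req.uri() + " " + req.bodyPublisher().get().contentLength());
        // Sending would be: HttpClient.newHttpClient().send(req, HttpResponse.BodyHandlers.ofString())
    }
}
```

Keeping request construction separate from sending makes it easy to check headers and payload without a live OCR service.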

Financial Services

AU

Apologies for not replying to your message, Zane Beckman. We have started looking at Tesseract and Microblink as well, and we are also exploring some options with Kofax and Jumio. Thanks for your suggestion.

Cognizant Technology Solutions

AU

Hi,

Does Pega support out-of-the-box (OOTB) integration with Kofax? According to https://www.pega.com/about/news/media-coverage/kofax-and-pegasystems-partner it does.

I'm not able to find any references to Kofax on PDN / Mesh. Could you please share your thoughts on this integration capability? I'd appreciate your help.

Thanks,

Sadaan

AGCS

DE

Check this link: Pega SFA now has OCR integrated into it. This is a live demo.

BlueRose Technologies

GB

Is there a step-by-step guide that explains this feature in Pega SA and how to use it?