Question

Barclays

GB

Last activity: 19 Oct 2021 8:03 EDT

pxResults page list stops at the 10K limit

Hi folks, I have been working on a requirement where I have to process a large number of records (say, PriceList), and the count can go up to 25K. After developing the solution, while unit testing the thresholds of the code, I noticed that some records were missing from PriceList.pxResults(). When I looked at pxResults, I realized that it holds no records beyond a count of 10K.

Is there any way to accommodate all the results in the page list without exhausting or altering pxResults? I am OK with changing the design as well. Right now, I am getting the data from an external database via an RDB query (in batches, not in one go) and appending it to a clipboard page (PriceList.pxResults), and I must show these PriceList records on the UI for further processing.

Appreciate any help here! Thanks!



Infomatics Corp

US

Hi Abhinav,

Is your requirement to show all 25K records on a single page? If there is pagination, you can leverage the pagination activity to load the records and manipulate the clipboard.

Question:

Is your requirement that the user is going to edit the 25K records on the UI for further processing in the flow?
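To make the idea of loading records in batches concrete, here is a minimal sketch in plain Python, not Pega code. It pages through a table by primary key so that only one batch is held at a time. The table name price_list, its columns, and the helper names are all hypothetical, and an in-memory SQLite database stands in for the external database.

```python
import sqlite3


def fetch_in_batches(conn, batch_size=1000):
    """Yield lists of rows, paging by primary key (keyset pagination)."""
    last_id = 0
    while True:
        rows = conn.execute(
            "SELECT id, price FROM price_list "
            "WHERE id > ? ORDER BY id LIMIT ?",
            (last_id, batch_size),
        ).fetchall()
        if not rows:
            return
        yield rows
        # Remember the last key seen so the next query resumes after it.
        last_id = rows[-1][0]


def demo_connection(n):
    """Build a throwaway in-memory table with n rows (illustration only)."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE price_list (id INTEGER PRIMARY KEY, price REAL)")
    conn.executemany(
        "INSERT INTO price_list (id, price) VALUES (?, ?)",
        ((i, i * 0.5) for i in range(1, n + 1)),
    )
    return conn
```

A caller would iterate over fetch_in_batches(conn, 4000) and render or process each batch in turn, rather than appending everything to one list first.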

-

Abhinav Chaudhary

Updated: 11 Jul 2020 23:42 EDT

Barclays

GB

Thanks, Abhilash, for your valuable input.

To answer your question: no, I am using pagination to show the records across multiple pages. The records I display on the UI depend on the offers selected on a previous page, and I pull and prepare the price list page list based on those offers only.

Yes, I have functionality where the user may duplicate an individual row on the UI, or, if they prefer, download the data to Excel, edit it offline, and upload it again for further processing. Ultimately, I store that data in an S3 bucket for further use.

Maantic Inc

US

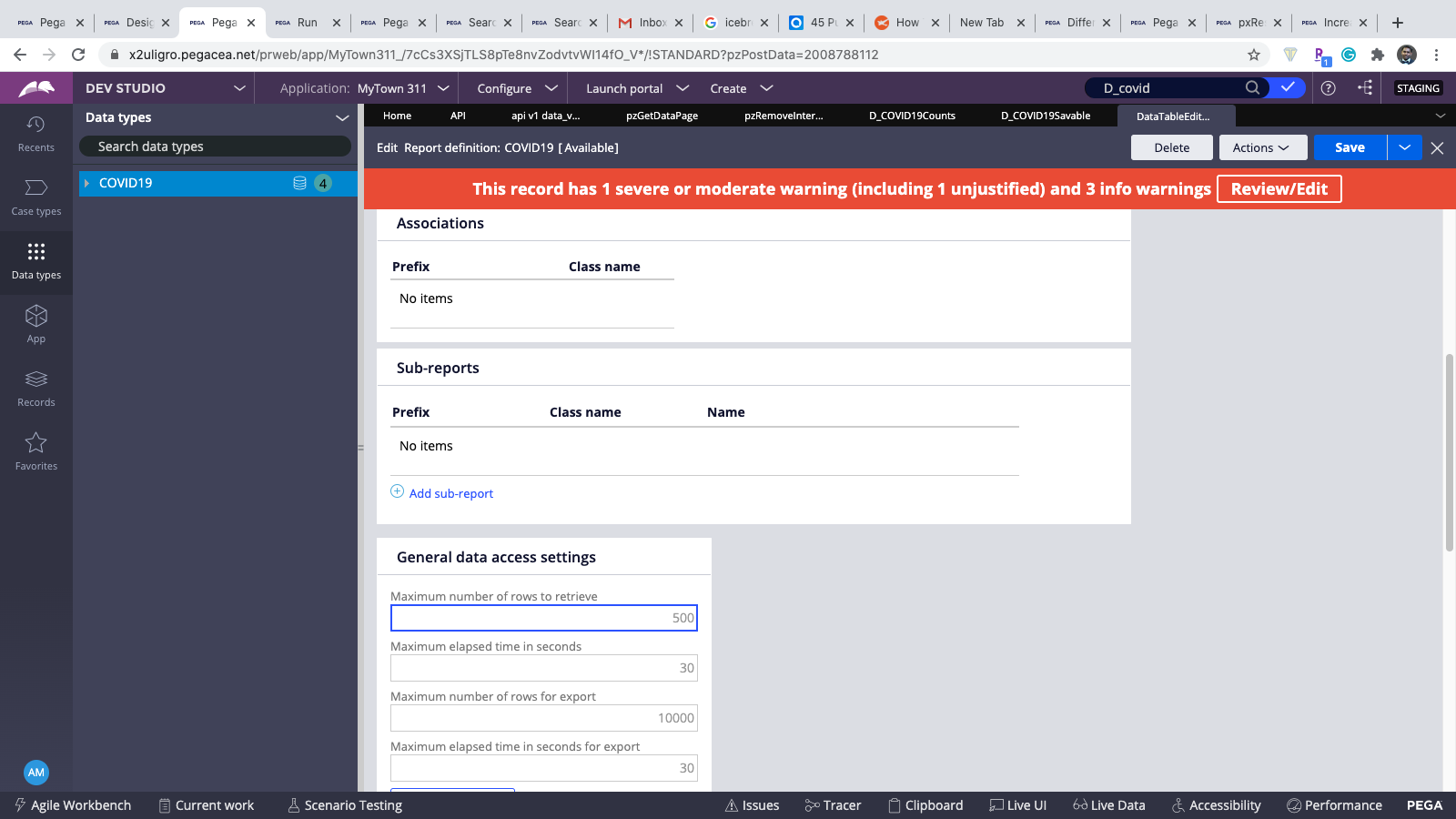

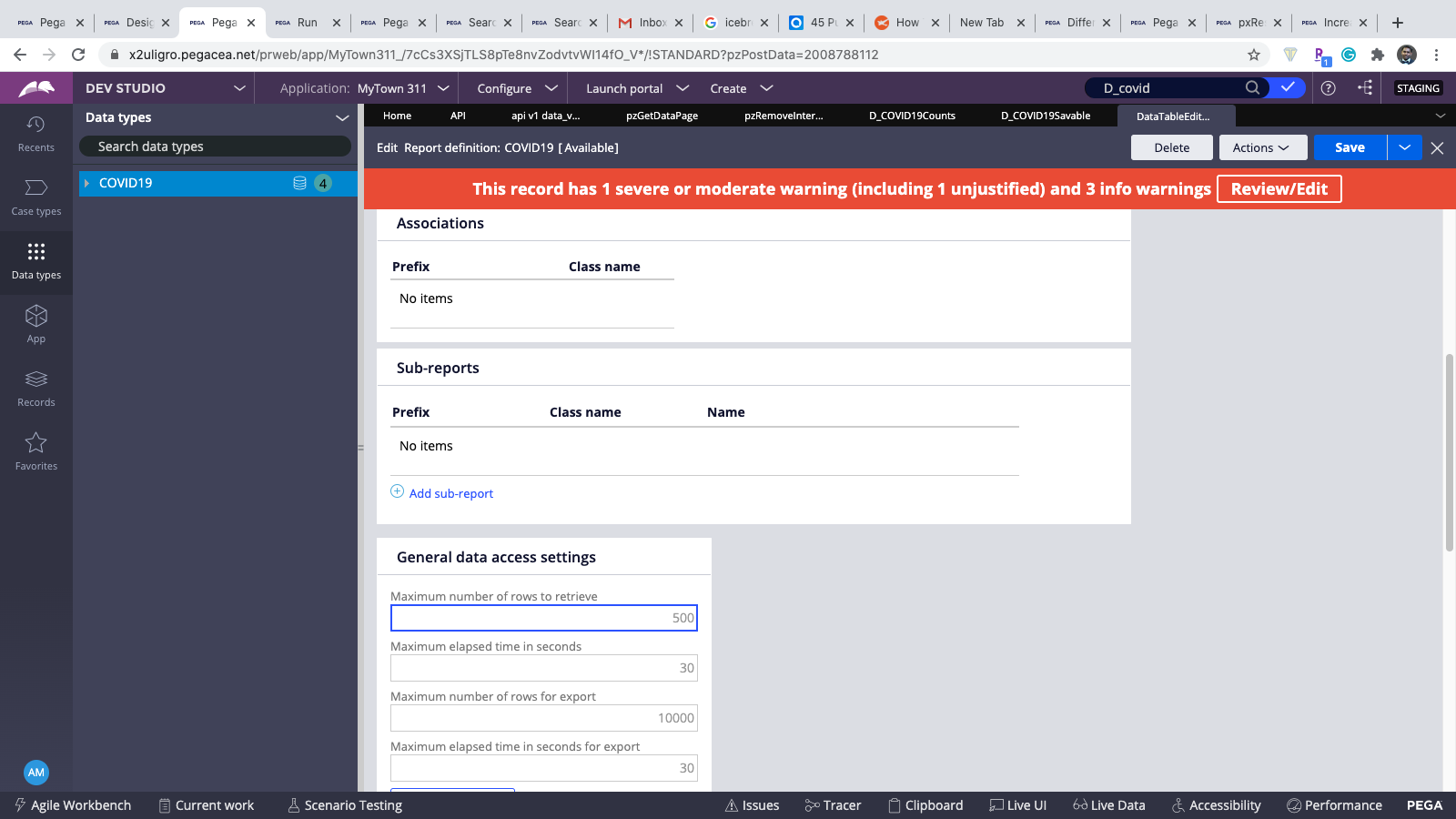

Hi Abhinav, did you check the Data Access tab configuration of your Report Definition?

The default value is 500, but you can update it to the number you require.

Navigation details (screen prints from v84)

Open the Report Definition, go to the Data Access tab, and under General data access settings find "Maximum number of rows to retrieve".

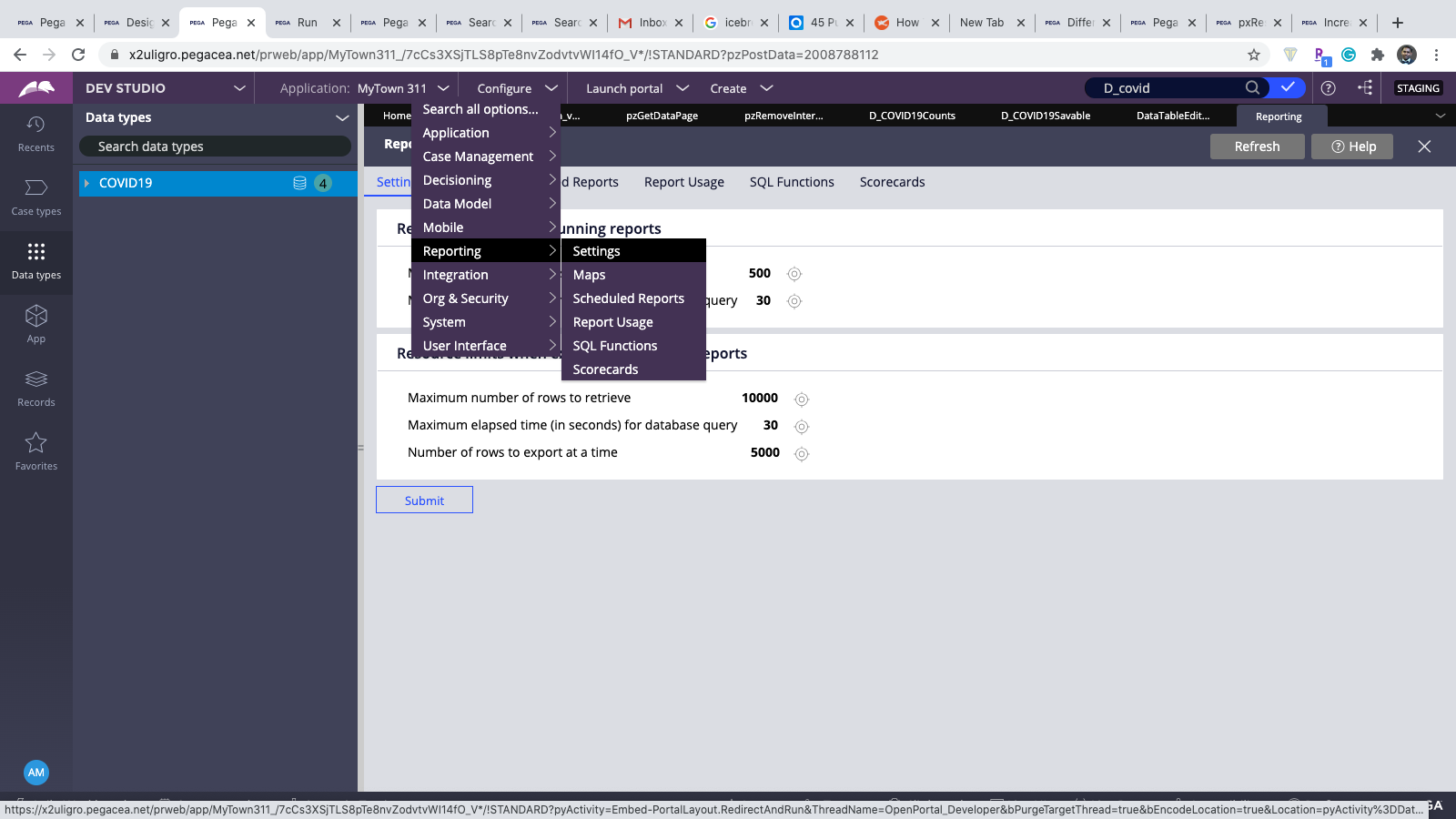

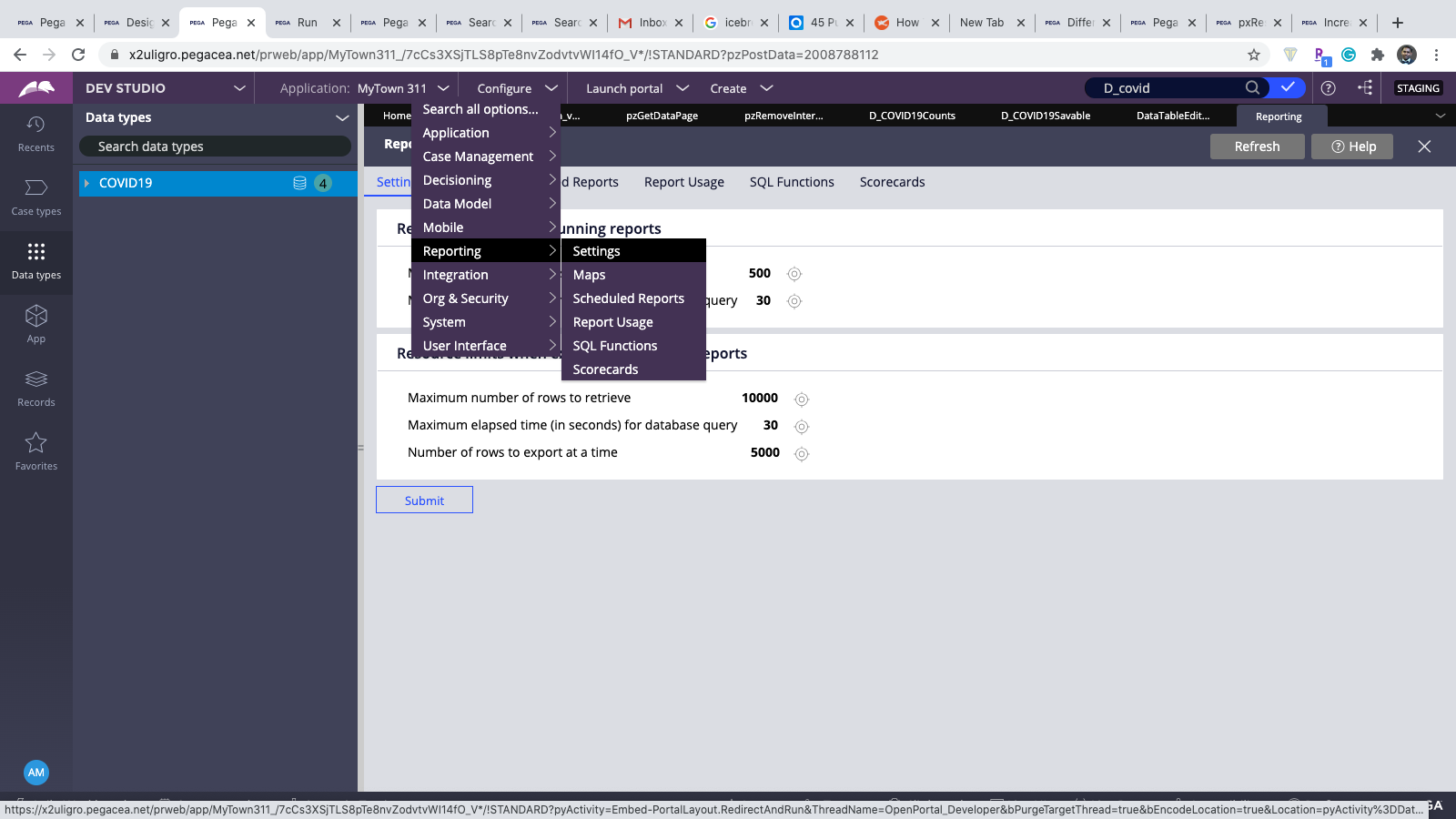

The other way to reach the report settings options:

Click the target icon next to "Maximum number of rows to retrieve". If you update the value in the application settings, it applies globally to all Report Definitions in the system.


-

Abhinav Chaudhary

Accepted Solution

Updated: 13 Jul 2020 11:59 EDT

Barclays

GB

Thanks, John. This is informative, and I am aware of this feature in Report Definition, but for my requirement I am not using an RD; I am using Obj-Browse.

Actually, it was a misunderstanding on my side; I had not done proper research. By default, the Obj-Browse method pulls a maximum of 10K records when you don't specify MaxRecords. This can be overridden by specifying a number, and if you set it to '0', it pulls all the qualifying records. So in my case, setting the MaxRecords parameter to '0' resolved the issue.
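The MaxRecords behaviour described above can be modelled in a few lines. This is only an illustrative Python sketch of the rule as stated in this post (no value means a 10K cap, 0 means no cap, any other number is the cap); it is not Pega code, and the function names are hypothetical.

```python
from itertools import islice

DEFAULT_MAX_RECORDS = 10_000  # default cap as described in the post


def effective_limit(max_records=None):
    """Return the row cap to apply, or None when there is no cap."""
    if max_records is None:
        return DEFAULT_MAX_RECORDS  # parameter left blank
    if max_records == 0:
        return None  # 0 means "all qualifying records"
    return max_records


def browse(rows, max_records=None):
    """Return rows truncated according to the effective limit."""
    limit = effective_limit(max_records)
    if limit is None:
        return list(rows)
    return list(islice(rows, limit))
```

With 25K qualifying rows, leaving the parameter blank returns 10,000 of them, which matches the symptom in the original question, while passing 0 returns all 25,000.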

-

Uddhao Mhaske Raghavendra Peddabudi

Infomatics Corp

US

Yes, there is no limit on how many records the clipboard can hold, but having too much data on the clipboard will impact JVM performance.

For ListView, RD, and Obj-Browse, passing the value 0 will pull all the records.

LTIMindtree

IN

How can I improve performance, or is there an alternative way of loading 20K rows or exporting them to an Excel sheet without compromising performance, while using the Report Definition?

Please answer...

Updated: 12 Jun 2021 7:16 EDT

Barclays

GB

@RaghaV I had a similar requirement where end users needed to download almost 50K records to Excel. Processing that in real time hurt performance. So we went with a job scheduler: whenever the record count was more than 5K, we processed the export through the job scheduler and sent it to the end user as an email attachment.
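The threshold-based routing described above can be sketched as follows. This is plain Python for illustration, not Pega code: the 5K threshold comes from the post, while the function names and the simple list standing in for the job scheduler's queue are hypothetical, and CSV is used here in place of Excel to keep the example self-contained.

```python
import csv
import io

ASYNC_THRESHOLD = 5_000  # above this count, defer to background processing


def rows_to_csv(rows, header):
    """Serialize rows to CSV text in memory."""
    buf = io.StringIO()
    writer = csv.writer(buf)
    writer.writerow(header)
    writer.writerows(rows)
    return buf.getvalue()


def handle_export(rows, header, background_queue, threshold=ASYNC_THRESHOLD):
    """Return CSV text for small exports; queue large ones and return None."""
    if len(rows) > threshold:
        # A scheduled job would later pick this up, build the file,
        # and email it to the user as an attachment.
        background_queue.append((header, rows))
        return None
    return rows_to_csv(rows, header)
```

The point of the split is that the interactive request only does the expensive work when the result is small; anything larger is handed off, so the user's session is not blocked.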

We are on Pega 8.1.0

-

Raghavendra Peddabudi Renan Sucupira

LTIMindtree

IN

Thank you, @Abhinav_Chaudhary. We did the same, and it helped a lot.